Today on Twitter, Sahil Malik asked the question “Is there any reason ever to use Request.QueryString over Request.Params?” (link – for as long as it persists on Twitter).

For the query string itself, there is practically no reason not to use the less specific Request[] indexer, because QueryString is always checked first and the lookup returns the first match it finds. Request.Params carries a small performance hit by comparison, because it goes through all of the collections (QueryString, Form, Cookies, and ServerVariables) regardless of where the key lives.
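To make the difference concrete, here is a minimal console sketch of the two lookup styles. It does not touch System.Web at all: FirstMatch and Merge are hypothetical stand-ins that I made up to imitate "stop at the first hit" and "combine everything up front", and the "theme" key is just sample data.

```csharp
using System;
using System.Collections.Specialized;

public static class LookupOrderSketch
{
    // Hypothetical stand-in for Request[key]: search the sources in order
    // and stop at the first one that contains the key.
    public static string FirstMatch(string key, params NameValueCollection[] sources)
    {
        foreach (var source in sources)
        {
            var value = source[key];
            if (value != null)
            {
                return value;
            }
        }
        return null;
    }

    // Hypothetical stand-in for Request.Params: merge every source up front,
    // whether or not the key we want lives in the first one.
    public static NameValueCollection Merge(params NameValueCollection[] sources)
    {
        var merged = new NameValueCollection();
        foreach (var source in sources)
        {
            merged.Add(source);
        }
        return merged;
    }

    public static void Main()
    {
        var queryString = new NameValueCollection { { "theme", "dark" } };
        var cookies = new NameValueCollection { { "theme", "light" } };

        Console.WriteLine(FirstMatch("theme", queryString, cookies)); // dark
        Console.WriteLine(Merge(queryString, cookies)["theme"]);      // dark,light
        Console.WriteLine(cookies["theme"]);                          // light
    }
}
```

Notice that in both of the non-specific lookups the query string value comes first, and the cookie value only comes back untouched when the cookie collection is asked for it by name.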

If you are trying to get at the Form, Cookies, or ServerVariables collections, there is a performance hit (albeit small) no matter which way you turn. (Information on the happenings inside the .NET Framework is available on this Hanselman post from a few years ago.)

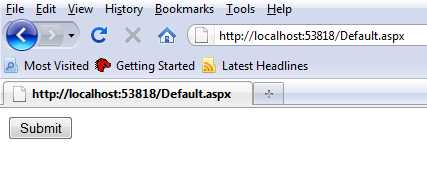

There is an issue, however, if you want to access any of the other collections by using either Request[] or Request.Params[]. And really, this is the code smell that I was worried about when responding to Sahil earlier today. Let’s make an example page here. I made this using ASP.NET Web Forms (to hopefully have the widest base of understanding).

Here is the meat of the .aspx page.

    <form id="form1" runat="server">
        <div>
            <asp:Button runat="server" Text="Submit" OnClick="submitButton_Click" ID="submitButton" />
        </div>
        <asp:Panel runat="server" Visible="false" ID="adminPanel">
            You shouldn't see me unless you are an admin.
        </asp:Panel>
    </form>

Here is the code behind

using System;

using System.Collections.Generic;

using System.Linq;

using System.Web;

using System.Web.UI;

using System.Web.UI.WebControls;

namespace Params

{

public partial class _Default : System.Web.UI.Page

{

protected void Page_Load(object sender, EventArgs e)

{

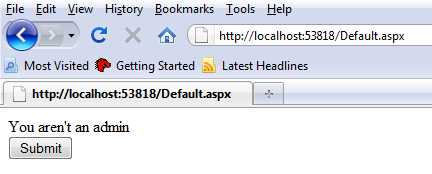

Response.Cookies.Add(new HttpCookie("IsAdmin", "false"));

}

protected void submitButton_Click(object sender, EventArgs e)

{

var value = Request["IsAdmin"];

if (Convert.ToBoolean(value))

{

adminPanel.Visible = true;

}

else

{

Response.Write("You aren't an admin");

}

}

}

}

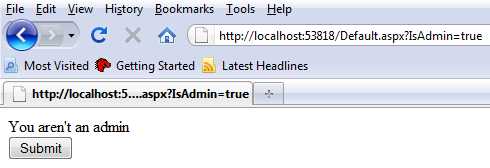

When we click the button, the cookie value is checked and since it is false, we get the sad news that we aren’t an admin.

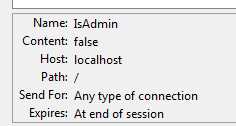

Okay, let’s now assume that someone is curious if you are using simple cookie checks, so they look inside Firefox to see what the cookies are on your site.

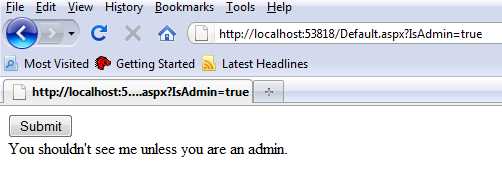

Now, let me take a chance that the operator of this site is being sloppy and is using Request[] instead of reading the value from the cookie collection explicitly. I’ll add ?IsAdmin=true onto the query string to mirror the cookie and then click the button. Since the QueryString collection is checked first, I’ll be overriding the cookie in this instance.

Here is our result: the admin panel, which should have stayed hidden, is now visible.

Well, that’s not good. What if we actually just check the cookie value instead of being lazy?

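    protected void submitButton_Click(object sender, EventArgs e)
    {
        // Read only from the cookie collection, so the query string can't override it.
        var cookie = Request.Cookies["IsAdmin"];

        // The cookie may be missing (for example, if cookies are disabled).
        // Convert.ToBoolean treats a null string as false.
        var value = cookie != null ? cookie.Value : null;
        if (Convert.ToBoolean(value))
        {
            adminPanel.Visible = true;
        }
        else
        {
            Response.Write("You aren't an admin");
        }
    }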

Now when I try the QueryString hack, the page is safe.

This doesn’t solve all of the problems with this page, though. I’m not pushing this off as some airtight secure solution. What I’m hoping to point out is that coding is hard, and even though we are given certain shortcuts, you really have to understand the ramifications of your code before throwing it out in the wild.
